Gesture and Sign Language

Overview

- Extending the Lab In A Box infrastructure, I combined Kinect and Leap Motion tracking to quantify the differences between sign language and co-speech gestures.

- I built novel 3D visualizations and analytics of hand-motion data collected with Kinect during experiments, showing how people move differently when using American Sign Language (ASL) versus co-speech gestures (hand movements made while speaking).

Outcomes

- I integrated Leap Motion hand tracking and data capture into the Lab In A Box software infrastructure.

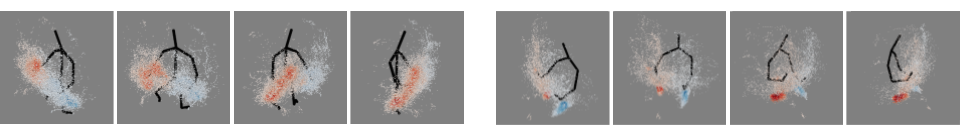

- I generated analyses and visualizations that showed ASL signers use a much larger and sparser area of hand motion than non-signers, moving widely around their upper chest and lower face.

Details

- While extending the Lab In A Box infrastructure to support an additional device (Leap Motion), I learned a lot about software design across operating systems. The software was initially built only for Windows, but I began working with more and more macOS users. This planted the seeds that would lead to ChronoSense being designed as an OS-agnostic platform.

- I built a number of visualizations and analysis approaches for making sense of the collected data. Most notable were my 3D density maps, which showed that ASL signers use a much more widely spread-out space to communicate than non-signers. In the accompanying figure, the ASL signer is on the left and the non-signer is on the right.
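
As a rough illustration of the idea behind a 3D density map, the sketch below bins hand positions into cubic voxels and compares how much space two recordings occupy. It is not the project's actual analysis code: the function names, the 5 cm voxel size, the metre units, and the synthetic Gaussian data are all assumptions made for the example.

```python
from collections import Counter
import math
import random


def voxel_density(points, voxel_size=0.05):
    """Count samples per cubic voxel.

    points: iterable of (x, y, z) hand positions (metres assumed).
    Returns a Counter mapping integer voxel indices (i, j, k) to counts.
    """
    counts = Counter()
    for x, y, z in points:
        key = (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        counts[key] += 1
    return counts


def occupied_volume(counts, voxel_size=0.05):
    """Total volume of all voxels visited at least once."""
    return len(counts) * voxel_size ** 3


def mean_samples_per_voxel(counts):
    """Average count over occupied voxels; lower means sparser motion."""
    return sum(counts.values()) / len(counts) if counts else 0.0


# Synthetic stand-in data (hypothetical, not experimental recordings):
# one widely spread recording and one tightly clustered recording.
rng = random.Random(0)
wide = [(rng.gauss(0, 0.20), rng.gauss(0, 0.20), rng.gauss(0, 0.10))
        for _ in range(2000)]
narrow = [(rng.gauss(0, 0.05), rng.gauss(0, 0.05), rng.gauss(0, 0.05))
          for _ in range(2000)]

wide_counts = voxel_density(wide)
narrow_counts = voxel_density(narrow)

print("wide volume (m^3):  ", occupied_volume(wide_counts))
print("narrow volume (m^3):", occupied_volume(narrow_counts))
print("wide samples/voxel:  ", mean_samples_per_voxel(wide_counts))
print("narrow samples/voxel:", mean_samples_per_voxel(narrow_counts))
```

A larger occupied volume with fewer samples per voxel corresponds to the "larger and sparser" pattern described above. The per-voxel counts could also be passed to a 3D plotting library to render the density map itself.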

Artifacts

Skills

- Multimodal Data Analysis (Python)

- Information Visualization (Python)

- Body Tracking and Hand Tracking

- Software Development (C#)